OVHcloud Deployment

Deploy on OVHcloud Managed Kubernetes with European data sovereignty and AI Endpoints

Intermediate

1 - 3 hr

- OVHcloud account with API access (see below for setup)

- Pulumi installed locally

- kubectl command-line tool

- Node.js and npm (the Pulumi deployment code is TypeScript/JavaScript)

- Python 3.11+ for CLI tools

- Basic command-line and Kubernetes familiarity

Deploy a production-ready TrustGraph environment on OVHcloud Managed Kubernetes with European data sovereignty using Infrastructure as Code.

Overview

This guide walks you through deploying TrustGraph on OVHcloud’s Managed Kubernetes Service (MKS) using Pulumi (Infrastructure as Code). The deployment automatically provisions a production-ready Kubernetes cluster integrated with OVHcloud’s AI Endpoints.

Pulumi is an open-source Infrastructure as Code tool that uses general-purpose programming languages (TypeScript/JavaScript in this case) to define cloud infrastructure. Unlike manual deployments, Pulumi provides:

- Reproducible, version-controlled infrastructure

- Testable and retryable deployments

- Automatic resource dependency management

- Simple rollback capabilities

Once deployed, you’ll have a complete TrustGraph stack running on OVHcloud infrastructure with:

- Managed Kubernetes cluster (2-node pool, configurable)

- OVHcloud AI Endpoints integration (Mistral Nemo Instruct)

- Complete monitoring with Grafana and Prometheus

- Web workbench for document processing and Graph RAG

- Secure secrets management

Why OVHcloud for TrustGraph?

OVHcloud offers unique advantages for global organizations:

- European Cloud Leader: Largest European cloud provider with 40+ data centers worldwide

- No Egress Fees: Unlimited outbound traffic included at no extra cost

- GDPR Native: Built-in compliance with European data protection standards

- Transparent Pricing: Predictable costs without hidden charges

- Anti-DDoS Included: Enterprise-grade protection at no extra cost

Ideal for organizations requiring European data sovereignty with global reach.

Getting ready

OVHcloud Account

You’ll need an OVHcloud account with API access. If you don’t have one:

- Sign up at https://www.ovh.com/

- Complete account verification

- Access the OVHcloud Control Panel

To create API credentials:

- Navigate to the OVHcloud API token creation page

- Fill in the form with:

  - Application name: TrustGraph Deployment

  - Application description: Pulumi deployment for TrustGraph

  - Validity: Choose appropriate duration (or unlimited)

  - Rights: Grant full access or specific rights for Kubernetes and AI services

- Click Create keys

- Save the Application Key, Application Secret, and Consumer Key securely

Python

You need Python 3.11 or later installed for the TrustGraph CLI tools.

Check your Python version

python3 --version

If you need to install or upgrade Python, visit python.org.

Pulumi

Install Pulumi on your local machine:

Linux

curl -fsSL https://get.pulumi.com | sh

MacOS

brew install pulumi/tap/pulumi

Windows

Download the installer from pulumi.com.

Verify installation:

pulumi version

Full installation details are at pulumi.com.

kubectl

Install kubectl to manage your Kubernetes cluster:

- Linux: Install kubectl on Linux

- MacOS: brew install kubectl

- Windows: Install kubectl on Windows

Verify installation:

kubectl version --client

Node.js

The Pulumi deployment code uses TypeScript/JavaScript, so you’ll need Node.js installed:

- Download: nodejs.org (LTS version recommended)

- Linux: sudo apt install nodejs npm (Ubuntu/Debian) or sudo dnf install nodejs (Fedora)

- MacOS: brew install node

Verify installation:

node --version

npm --version

OVHcloud AI Endpoints

The deployment uses OVHcloud’s AI Endpoints service with Mistral Nemo Instruct as the default model. You’ll need to:

- Access OVHcloud AI Endpoints in the Control Panel

- Generate an AI Endpoints token for authentication

- Note the token for configuration later

OVHcloud AI Endpoints provides access to various AI models including Mistral, LLaMA 3, and Codestral, with processing available in European data centers.

Prepare the deployment

Get the Pulumi code

Clone the TrustGraph OVHcloud Pulumi repository:

git clone https://github.com/trustgraph-ai/pulumi-trustgraph-ovhcloud.git

cd pulumi-trustgraph-ovhcloud/pulumi

Install dependencies

Install the Node.js dependencies for the Pulumi project:

npm install

Configure OVHcloud credentials

Set the required OVHcloud environment variables using the credentials you created earlier:

export OVH_ENDPOINT=ovh-eu # or ovh-ca, ovh-us based on your region

export OVH_APPLICATION_KEY="your_application_key_here"

export OVH_APPLICATION_SECRET="your_application_secret_here"

export OVH_CONSUMER_KEY="your_consumer_key_here"
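Before running any Pulumi command, it can help to confirm that all four variables are actually exported in the current shell. The following is a minimal sketch and not part of the TrustGraph repository; the function name check_ovh_env is made up for this example.

```shell
# Hypothetical helper: report which OVHcloud variables from this guide are
# missing from the environment. Prints one line per missing variable and
# returns 1 if any are missing, 0 otherwise.
check_ovh_env() {
    missing=0
    for name in OVH_ENDPOINT OVH_APPLICATION_KEY OVH_APPLICATION_SECRET OVH_CONSUMER_KEY; do
        # printenv only sees exported variables, which is what Pulumi sees too
        if [ -z "$(printenv "$name")" ]; then
            echo "missing: $name"
            missing=1
        fi
    done
    return "$missing"
}
```

Calling check_ovh_env on its own prints nothing when all four variables are set. A variable that was assigned without export is reported as missing, because child processes such as Pulumi would not see it either.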

Configure Pulumi state

You need to tell Pulumi which state to use. You can store this in an S3 bucket, but for experimentation, you can just use local state:

pulumi login --local

When storing secrets in the Pulumi state, Pulumi encrypts them with a secret passphrase. In a production or shared environment you would need to evaluate the security arrangements around secrets.

For a development deployment, this guide sets the passphrase to the empty string, on the assumption that leaving the secrets effectively unprotected is acceptable.

export PULUMI_CONFIG_PASSPHRASE=
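For anything beyond a throwaway development stack, one option is to keep a random passphrase in a file that only your user can read, and point Pulumi at it with the PULUMI_CONFIG_PASSPHRASE_FILE environment variable. The sketch below is an illustration only; the helper name and the file path are assumptions, not something the repository prescribes.

```shell
# Hypothetical helper: write a random 64-character hex passphrase to the given
# path, readable and writable only by the current user.
make_passphrase_file() {
    path=$1
    (
        # Restrictive umask inside a subshell so the caller's umask is untouched
        umask 077
        head -c 32 /dev/urandom | od -An -tx1 | tr -d ' \n' > "$path"
        # Also tighten permissions in case the file already existed
        chmod 600 "$path"
    )
}

# Usage (the path is an example):
#   make_passphrase_file "$HOME/.trustgraph-pulumi-passphrase"
#   unset PULUMI_CONFIG_PASSPHRASE
#   export PULUMI_CONFIG_PASSPHRASE_FILE="$HOME/.trustgraph-pulumi-passphrase"
```

The passphrase needs to be in place before pulumi stack init, since the stack is created with it. Keep the file somewhere safe: without it, the secrets in the stack cannot be decrypted.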

Create a Pulumi stack

Initialize a new Pulumi stack for your deployment:

pulumi stack init dev

You can use any name instead of dev - this helps you manage multiple deployments (dev, staging, prod, etc.).

Configure the stack

Apply settings for region, service name, and AI token. The service name is used to construct resource names:

pulumi config set ovhcloud:region GRA11 # or other available region

pulumi config set serviceName trustgraph-prod

pulumi config set --secret aiEndpointsToken your_ai_endpoints_token_here

Available regions include:

- GRA11 (Gravelines, France)

- SBG5 (Strasbourg, France)

- BHS5 (Beauharnois, Canada)

- DE1 (Frankfurt, Germany)

- WAW1 (Warsaw, Poland)

Refer to the repository’s README for more region options and configuration details.
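Passing the AI Endpoints token as a command-line argument leaves it in your shell history. As a hedged alternative, the three pulumi config set commands above can be wrapped so that the token is piped in on standard input, which pulumi config set reads when no value is given on the command line. The function name configure_stack and the variable AI_ENDPOINTS_TOKEN are assumptions made for this sketch.

```shell
# Hypothetical wrapper around the three `pulumi config set` commands.
# Usage: configure_stack REGION SERVICE_NAME
# Expects the AI Endpoints token in AI_ENDPOINTS_TOKEN (a variable name chosen
# for this example).
configure_stack() {
    region=$1
    service=$2
    if [ -z "${AI_ENDPOINTS_TOKEN:-}" ]; then
        echo "AI_ENDPOINTS_TOKEN is not set" >&2
        return 1
    fi
    # The last command has no value argument, so pulumi reads the secret
    # from standard input instead of the command line.
    pulumi config set ovhcloud:region "$region" &&
        pulumi config set serviceName "$service" &&
        printf '%s' "$AI_ENDPOINTS_TOKEN" |
            pulumi config set --secret aiEndpointsToken
}

# Usage:
#   configure_stack GRA11 trustgraph-prod
```

One way to set AI_ENDPOINTS_TOKEN without echoing it is a silent prompt in your shell. Afterwards, pulumi config lists the stored values, with the token shown as a secret.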

Deploy with Pulumi

Preview the deployment

Before deploying, preview what Pulumi will create:

pulumi preview

This shows all the resources that will be created:

- Managed Kubernetes cluster

- Node pool with specified instance types

- Private network configuration

- Service account with AI Endpoints access

- Kubernetes secrets for API keys and configuration

- TrustGraph deployments, services, and config maps

Review the output to ensure everything looks correct.

Deploy the infrastructure

Deploy the complete TrustGraph stack:

pulumi up

Pulumi will ask for confirmation before proceeding. Type yes to continue.

The deployment typically takes 8 - 12 minutes and progresses through these stages:

- Creating Kubernetes cluster (5-7 minutes)

  - Provisions Managed Kubernetes cluster

  - Creates node pool

  - Configures networking

- Configuring service account and secrets (1-2 minutes)

  - Creates service account

  - Sets up AI Endpoints access

  - Creates Kubernetes secrets

- Deploying TrustGraph (4-6 minutes)

  - Applies Kubernetes manifests

  - Deploys all TrustGraph services

  - Starts pods and initializes services

You’ll see output showing the creation progress of all resources.

Configure and verify kubectl access

After deployment completes, a configuration file permitting access to the Kubernetes cluster is written to kubeconfig.yaml. This file should be treated as a secret as it contains access keys for the Kubernetes cluster.

Check you can access the cluster:

export KUBECONFIG=$(pwd)/kubeconfig.yaml

# Verify access

kubectl get nodes

You should see your OVHcloud Managed Kubernetes nodes listed as Ready.

Check pod status

Verify that all pods are running:

kubectl -n trustgraph get pods

You should see output similar to this (pod names will have different random suffixes):

NAME READY STATUS RESTARTS AGE

agent-manager-74fbb8b64-nzlwb 1/1 Running 0 5m

api-gateway-b6848c6bb-nqtdm 1/1 Running 0 5m

cassandra-6765fff974-pbh65 1/1 Running 0 5m

pulsar-d85499879-x92qv 1/1 Running 0 5m

text-completion-58ccf95586-6gkff 1/1 Running 0 5m

workbench-ui-5fc6d59899-8rczf 1/1 Running 0 5m

...

All pods should show Running status. Some init pods (names ending in -init) may fail or show a Completed status. This is normal: their job is to initialise cluster resources and then exit.
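With many pods, scanning the list by eye gets tedious. The filter below is a small sketch that prints only the pods that need attention. It assumes the default kubectl column order (NAME, READY, STATUS, ...) and treats any pod whose name contains -init as an init pod; both the function name and that naming rule are assumptions for this example.

```shell
# Hypothetical filter: read `kubectl -n trustgraph get pods --no-headers`
# output on stdin and print "NAME STATUS" for pods that are neither Running
# nor Completed. Pods whose name contains "-init" are skipped, since they are
# expected to exit.
unhealthy_pods() {
    awk '$1 !~ /-init/ && $3 != "Running" && $3 != "Completed" { print $1, $3 }'
}

# Usage:
#   kubectl -n trustgraph get pods --no-headers | unhealthy_pods
```

Empty output means every non-init pod is Running or Completed.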

Access services via port-forwarding

Since the Kubernetes cluster is running on OVHcloud, you’ll need to set up port-forwarding to access TrustGraph services from your local machine.

Open three separate terminal windows in the directory that contains kubeconfig.yaml and run these commands (keep them running):

Terminal 1 - API Gateway:

export KUBECONFIG=$(pwd)/kubeconfig.yaml

kubectl -n trustgraph port-forward svc/api-gateway 8088:8088

Terminal 2 - Workbench UI:

export KUBECONFIG=$(pwd)/kubeconfig.yaml

kubectl -n trustgraph port-forward svc/workbench-ui 8888:8888

Terminal 3 - Grafana:

export KUBECONFIG=$(pwd)/kubeconfig.yaml

kubectl -n trustgraph port-forward svc/grafana 3000:3000

With these port-forwards running, you can access:

- TrustGraph API: http://localhost:8088

- Web Workbench: http://localhost:8888

- Grafana Monitoring: http://localhost:3000

Keep these terminal windows open while you’re working with TrustGraph. If you close them, you’ll lose access to the services.
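If you would rather use a single terminal, the three port-forwards can be started in the background and stopped together. This is a sketch under the assumption that KUBECONFIG is already exported; the names FORWARDS and start_port_forwards are invented for the example.

```shell
# Service name and port for each forward, taken from the commands above.
FORWARDS="api-gateway:8088 workbench-ui:8888 grafana:3000"

# Hypothetical helper: start all port-forwards in the background from one
# terminal and stop them together on Ctrl-C.
start_port_forwards() {
    pids=""
    for spec in $FORWARDS; do
        svc=${spec%%:*}     # text before the colon
        port=${spec##*:}    # text after the colon
        kubectl -n trustgraph port-forward "svc/$svc" "$port:$port" &
        pids="$pids $!"
    done
    # On Ctrl-C or termination, stop every background port-forward
    trap 'kill $pids 2>/dev/null' INT TERM
    # Block until all port-forwards have exited or a signal arrives
    wait
    trap - INT TERM
}
```

To use it, save the block as a script, add a final line that calls start_port_forwards, and run the script from the directory where KUBECONFIG points to a valid file. Pressing Ctrl-C ends all three forwards.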

Install CLI tools

Now install the TrustGraph command-line tools. These tools help you interact with TrustGraph, load documents, and verify the system.

Create a Python virtual environment and install the CLI:

python3 -m venv env

source env/bin/activate # On Windows: env\Scripts\activate

pip install trustgraph-cli

Startup period

It can take 2-3 minutes for all services to stabilize after deployment. Services like Pulsar and Cassandra need time to initialize properly.
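Rather than waiting a fixed time, you can poll until a check succeeds. The helper below is a generic retry loop, offered as a sketch; the function name retry is an assumption, and it relies on the command you pass exiting with a non-zero status on failure.

```shell
# retry ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND until it exits 0 or ATTEMPTS runs have failed.
retry() {
    attempts=$1
    delay=$2
    shift 2
    attempt=1
    while [ "$attempt" -le "$attempts" ]; do
        if "$@"; then
            return 0
        fi
        echo "attempt $attempt/$attempts failed: $*" >&2
        attempt=$((attempt + 1))
        # Pause between attempts, but not after the final one
        if [ "$attempt" -le "$attempts" ]; then
            sleep "$delay"
        fi
    done
    return 1
}

# Usage (the deployment name api-gateway is inferred from the pod names shown
# earlier and may differ in your cluster):
#   retry 10 15 kubectl -n trustgraph rollout status deployment/api-gateway --timeout=30s
```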

Verify system health

tg-verify-system-status

If everything is working, the output looks something like this:

============================================================

TrustGraph System Status Verification

============================================================

Phase 1: Infrastructure

------------------------------------------------------------

[00:00] ⏳ Checking Pulsar...

[00:03] ⏳ Checking Pulsar... (attempt 2)

[00:03] ✓ Pulsar: Pulsar healthy (0 cluster(s))

[00:03] ⏳ Checking API Gateway...

[00:03] ✓ API Gateway: API Gateway is responding

Phase 2: Core Services

------------------------------------------------------------

[00:03] ⏳ Checking Processors...

[00:03] ✓ Processors: Found 34 processors (≥ 15)

[00:03] ⏳ Checking Flow Classes...

[00:06] ⏳ Checking Flow Classes... (attempt 2)

[00:09] ⏳ Checking Flow Classes... (attempt 3)

[00:22] ⏳ Checking Flow Classes... (attempt 4)

[00:35] ⏳ Checking Flow Classes... (attempt 5)

[00:38] ⏳ Checking Flow Classes... (attempt 6)

[00:38] ✓ Flow Classes: Found 9 flow class(es)

[00:38] ⏳ Checking Flows...

[00:38] ✓ Flows: Flow manager responding (1 flow(s))

[00:38] ⏳ Checking Prompts...

[00:38] ✓ Prompts: Found 16 prompt(s)

Phase 3: Data Services

------------------------------------------------------------

[00:38] ⏳ Checking Library...

[00:38] ✓ Library: Library responding (0 document(s))

Phase 4: User Interface

------------------------------------------------------------

[00:38] ⏳ Checking Workbench UI...

[00:38] ✓ Workbench UI: Workbench UI is responding

============================================================

Summary

============================================================

Checks passed: 8/8

Checks failed: 0/8

Total time: 00:38

✓ System is healthy!

The Checks failed line is the one to look at first: it should show zero failures. If you are having issues, see the Troubleshooting section below.
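If you want to act on the result in a script, the failure count can be pulled out of the summary. This sketch assumes the summary line appears exactly as in the sample output above (Checks failed: N/M at the start of a line); the function name failed_checks is made up for the example.

```shell
# Hypothetical filter: print the number before the slash on the
# "Checks failed: N/M" summary line; prints nothing if the line is absent.
failed_checks() {
    sed -n 's/^Checks failed: \([0-9][0-9]*\)\/.*$/\1/p'
}

# Usage:
#   failed=$(tg-verify-system-status | failed_checks)
#   [ "$failed" = "0" ] && echo "all checks passed"
```

An empty result means the summary line was not found, which is worth treating as a failure rather than a pass.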

If everything appears to be working, the following parts of the deployment guide are a whistle-stop tour through various parts of the system.

Test LLM access

Test that OVHcloud AI Endpoints integration is working by invoking the LLM through the gateway:

tg-invoke-llm 'Be helpful' 'What is 2 + 2?'

You should see output like:

2 + 2 = 4

This confirms that TrustGraph can successfully communicate with OVHcloud’s AI Endpoints service.

Load sample documents

Load a small set of sample documents into the library for testing:

tg-load-sample-documents

This downloads documents from the internet and caches them locally. The download can take a little time to run.

Workbench

TrustGraph includes a web interface for document processing and Graph RAG.

Access the TrustGraph workbench at http://localhost:8888 (requires port-forwarding to be running).

By default, there are no credentials.

You should be able to navigate to the Flows tab and see a single default flow running. The guide will return to the workbench to load a document.

Monitoring dashboard

Access Grafana monitoring at http://localhost:3000 (requires port-forwarding to be running).

Default credentials:

- Username: admin

- Password: admin

All TrustGraph components collect metrics using Prometheus, and Grafana makes these available for viewing. The Grafana deployment is configured with 2 dashboards:

- Overview metrics dashboard: Shows processing metrics

- Logs dashboard: Shows collated TrustGraph container logs

For a newly launched system, the metrics won’t be particularly interesting yet.

Check the LLM is working

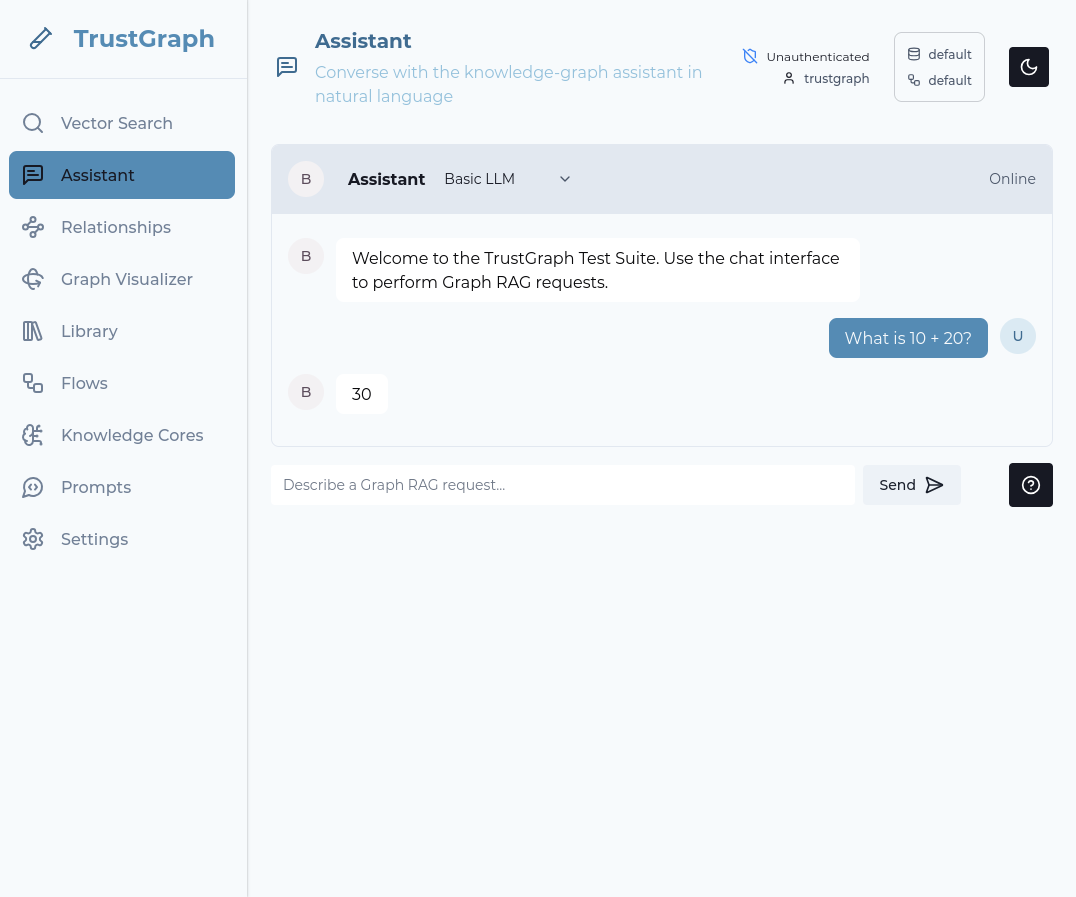

Back in the workbench, select the Assistant tab.

In the top line next to the Assistant word, change the mode to Basic LLM.

Enter a question in the prompt box at the bottom of the tab and press Send. If everything works, after a short period you should see a response to your query.

If LLM interactions are not working, check the Grafana logs dashboard for errors in the text-completion service.

Working with a document

Load a document

Back in the workbench:

- Navigate to the Library page

- In the upper right-hand corner, there is a dark/light mode widget. To its left is a selector widget. Ensure the top and bottom lines say “default”. If not, click on the widget and change.

- On the library tab, select a document (e.g., “Beyond State Vigilance”)

- Click Submit on the action bar

- Choose a processing flow (use Default processing flow)

- Click Submit to process

Beyond State Vigilance is a relatively short document, so it’s a good one to start with.

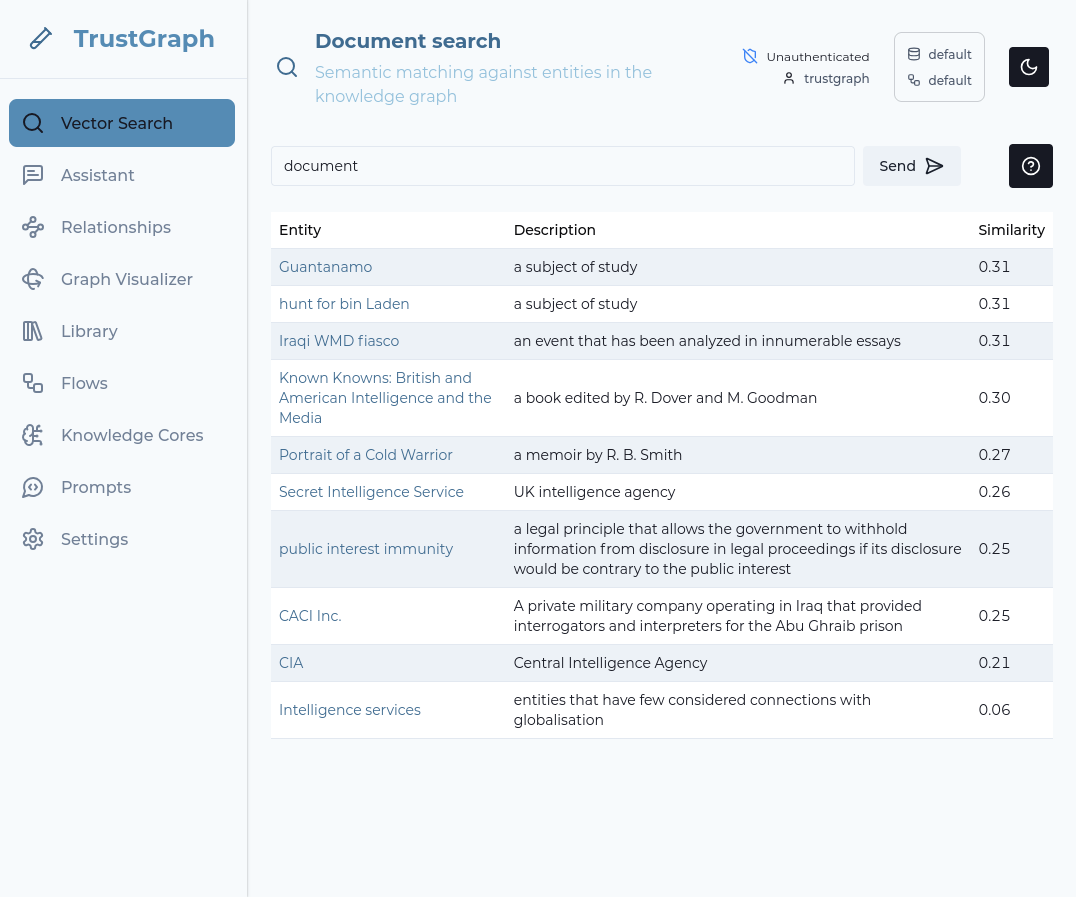

Use Vector search

Select the Vector Search tab. Enter a string (e.g., “document”) in the search bar and hit RETURN. The search term doesn’t matter a great deal. If information has started to load, you should see some search results.

The vector search attempts to find up to 10 terms which are the closest matches for your search term. It does this even if the search terms are not a strong match, so this is a simple way to observe whether data has loaded.

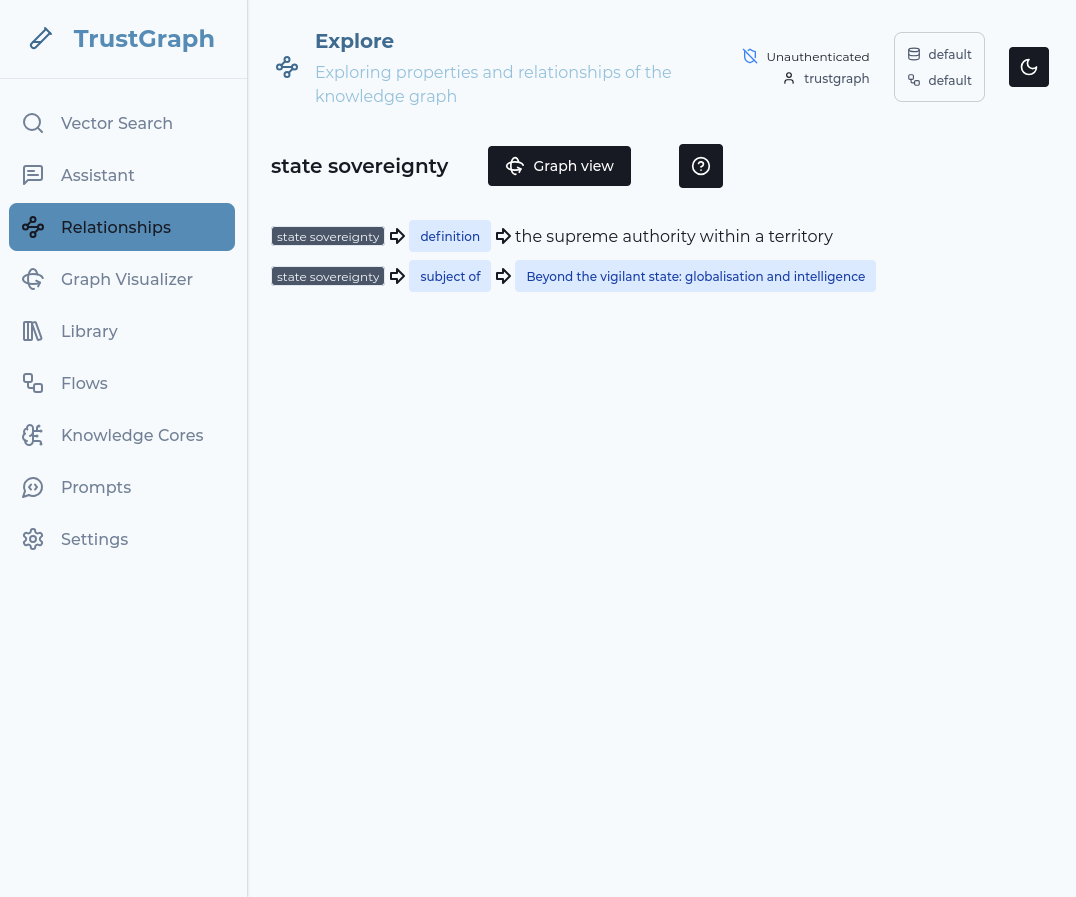

Look at knowledge graph

Click on one of the Vector Search result terms on the left-hand side. This shows the relationships in the knowledge graph that link to that term.

You can then click on the Graph view button to go to a 3D view of the discovered relationships.

Query with Graph RAG

- Navigate to Assistant tab

- Change the Assistant mode to GraphRAG

- Enter your question (e.g., “What is this document about?”)

- You will see the answer to your question after a short period

Troubleshooting

Deployment Issues

Pulumi deployment fails

Diagnosis:

Check the Pulumi error output for specific failure messages. The following commands show more detail about the stack:

# View detailed error information

pulumi stack --show-urns

pulumi logs

Resolution:

- Authentication errors: Verify your OVHcloud credentials are set correctly (OVH_APPLICATION_KEY, OVH_APPLICATION_SECRET, etc.)

- Quota limits: Check your OVHcloud account hasn’t hit resource quotas (Kubernetes clusters, nodes, etc.)

- Region availability: Ensure Managed Kubernetes is available in your selected region

- Permissions: Verify your API credentials have permissions to create Kubernetes clusters and access AI services

Pods stuck in Pending state

Diagnosis:

kubectl -n trustgraph get pods | grep Pending

kubectl -n trustgraph describe pod <pod-name>

Look for scheduling failures or resource constraints in the describe output.

Resolution:

- Insufficient resources: Increase node count or node type in your Pulumi configuration

- PersistentVolume issues: Check PV/PVC status with kubectl -n trustgraph get pv,pvc

- Node issues: Check node status with kubectl get nodes

OVHcloud AI Endpoints integration not working

Diagnosis:

Test LLM connectivity:

tg-invoke-llm '' 'What is 2+2'

A timeout or error indicates AI Endpoints configuration issues. Check the text-completion pod logs:

kubectl -n trustgraph logs -l app=text-completion

Resolution:

- Verify OVHcloud AI Endpoints is enabled in your account

- Check that the AI Endpoints token is correct and has not expired

- Ensure the token secret was created correctly by Pulumi

- Review Pulumi outputs to confirm AI configuration:

pulumi stack output

Port-forwarding connection issues

Diagnosis:

Port-forward commands fail or connections time out.

Resolution:

- Verify the KUBECONFIG environment variable is set correctly

- Check that the target service exists: kubectl -n trustgraph get svc

- Ensure no other process is using the port (e.g., port 8088, 8888, or 3000)

- Try restarting the port-forward with verbose logging: kubectl port-forward -v=6 ...

Service Failure

Pods in CrashLoopBackOff

Diagnosis:

# Find crashing pods

kubectl -n trustgraph get pods | grep CrashLoopBackOff

# View logs from crashed container

kubectl -n trustgraph logs <pod-name> --previous
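When several pods are crashing, the two commands above can be combined into one pass. The following is a sketch rather than a supported tool; the function name dump_crash_logs is an assumption, and it relies on the default kubectl column order, in which STATUS is the third column.

```shell
# Hypothetical helper: print the previous container logs for every pod in the
# trustgraph namespace whose STATUS is CrashLoopBackOff.
dump_crash_logs() {
    kubectl -n trustgraph get pods --no-headers |
        awk '$3 == "CrashLoopBackOff" { print $1 }' |
        while read -r pod; do
            echo "== $pod =="
            # Redirect stdin so the inner command cannot consume the pod list
            kubectl -n trustgraph logs "$pod" --previous </dev/null
        done
}

# Usage:
#   dump_crash_logs > crash-logs.txt
```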

Resolution:

Check the logs to identify why the container is crashing. Common causes:

- Application errors (configuration issues)

- Missing dependencies (ensure all required services are running)

- Incorrect secrets or environment variables

- Resource limits too low

Service not responding

Diagnosis:

Check service and pod status:

kubectl -n trustgraph get svc

kubectl -n trustgraph get pods

kubectl -n trustgraph logs <pod-name>

Resolution:

- Verify the pod is running and ready

- Check pod logs for errors

- Ensure port-forwarding is active for the service

- Use tg-verify-system-status to check overall system health

Shutting down

Clean shutdown

When you’re finished with your TrustGraph deployment, clean up all resources:

pulumi destroy

Pulumi will show you all the resources that will be deleted and ask for confirmation. Type yes to proceed.

The destruction process typically takes 5-10 minutes and removes:

- All TrustGraph Kubernetes resources

- The Managed Kubernetes cluster

- Node pools

- Service accounts and API access

- All associated networking and storage

Cost Warning: OVHcloud charges for running Kubernetes clusters and nodes. Make sure to destroy your deployment when you’re not using it to avoid unnecessary costs.

Verify cleanup

After pulumi destroy completes, verify all resources are removed:

# Check Pulumi stack status

pulumi stack

# Verify no resources remain

pulumi stack --show-urns

You can also check the OVHcloud Control Panel to ensure the Managed Kubernetes cluster and associated resources are deleted.

Delete the Pulumi stack

If you’re completely done with this deployment, you can remove the Pulumi stack:

pulumi stack rm dev

This removes the stack’s state but does not delete any cloud resources, so run pulumi destroy first.

Next Steps

Now that you have TrustGraph running on OVHcloud:

- Guides: See Guides for things you can do with your running TrustGraph

- Scale the cluster: Modify your Pulumi configuration to add more nodes or change node types

- Integrate with OVHcloud services: Connect to Object Storage, databases, or other OVHcloud services

- Multi-region deployment: Deploy TrustGraph across multiple OVHcloud regions for high availability

- Production hardening: Review the GitHub repository for advanced configuration options

Additional Resources

For Pulumi-specific configuration details, customization options, and contributing to the deployment code, visit the TrustGraph OVHcloud Pulumi Repository.